Combining vision and touch sensors with neuromorphic computing can result in robots that outperform humans in dexterity and acuity.

Robots with a keen sense of both sight and touch: members of the Intel Neuromorphic Research Community (INRC) hope such robots can extend the capabilities of robotic automation.

Most of today’s robots rely solely on visual processing. Giving robots a human-like sense of touch could significantly improve their current functionality and even open up new use cases.

For example, robotic arms fitted with artificial skin could easily adapt to changes in the quality of manufactured goods on the production line. A mechanical arm that can feel and perceive its surroundings by touch could also allow closer and safer human-robot interaction, such as in caregiving, and could improve accuracy in surgical tasks.

Artificial skin, neuromorphic logic

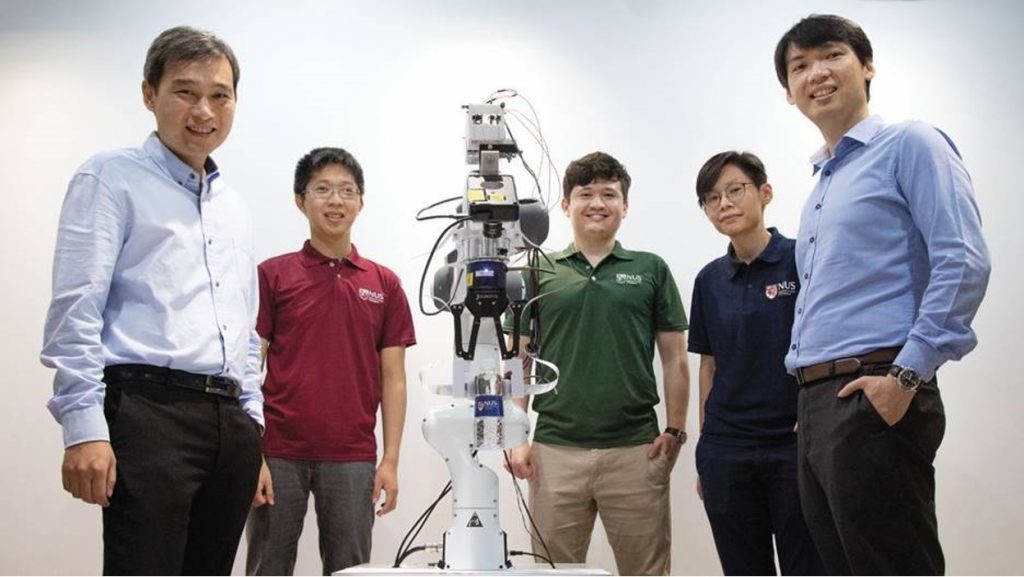

Two researchers from the National University of Singapore (NUS) recently demonstrated the promise of combining event-based vision and touch sensing with Intel’s neuromorphic processing for robotics.

Their artificial skin can detect touch more than 1,000 times faster than the human sensory nervous system. It can also identify the shape, texture and hardness of objects 10 times faster than the blink of an eye.

Creating artificial skin is one step toward bringing this vision to life. It also requires a chip that can draw accurate conclusions from the skin’s sensory data in real time while consuming little enough power to be deployed directly inside the robot. As Assistant Professor Benjamin Tee of the NUS Department of Materials Science and Engineering and the NUS Institute for Health Innovation & Technology put it: “Making an ultra-fast artificial skin sensor solves about half the puzzle of making robots smarter. It also needs an artificial brain that can ultimately achieve perception and learning as another critical piece in the puzzle. Our unique demonstration of an AI skin system with neuromorphic chips such as the Intel Loihi provides a major step forward towards power-efficiency and scalability.”

Touching on neuromorphic technology

A neuromorphic chip is an integrated circuit designed to imitate how a biological brain works, typically by modelling neurons and the synapses that connect them. Such a chip can process data from sensors like those that emulate the sense of touch in artificial skin.
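To make “modelling neurons and synapses” a little more concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a simplified neuron model widely used in spiking neural networks. It is an illustration only, not Loihi’s actual neuron implementation or programming interface; the function name, the `decay` and `threshold` parameters, and the weight values are assumptions chosen for readability.

```python
from typing import List


def lif_neuron(input_spikes: List[List[int]], weights: List[float],
               decay: float = 0.9, threshold: float = 1.0) -> List[int]:
    """Simulate one leaky integrate-and-fire neuron in discrete time steps.

    input_spikes[t][i] is 1 if input i spiked at step t, else 0.
    weights[i] is the (hypothetical) synaptic weight of input i.
    Returns the neuron's output spike train, one 0/1 entry per step.
    """
    potential = 0.0
    output = []
    for spikes_at_t in input_spikes:
        # Leak: the membrane potential decays toward zero every step.
        potential *= decay
        # Integrate: each incoming spike adds its synaptic weight.
        potential += sum(w * s for w, s in zip(weights, spikes_at_t))
        # Fire and reset once the potential crosses the threshold.
        if potential >= threshold:
            output.append(1)
            potential = 0.0
        else:
            output.append(0)
    return output


# Two touch-sensing inputs observed over five time steps.
spikes_in = [[1, 0], [1, 1], [0, 0], [0, 1], [1, 1]]
print(lif_neuron(spikes_in, weights=[0.4, 0.5]))  # → [0, 1, 0, 0, 1]
```

The neuron only does work, and only emits output, when spikes arrive, which is the event-driven behaviour that lets neuromorphic hardware stay idle, and save power, when a sensor reports nothing.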

In their initial experiment, the NUS researchers used a robotic hand fitted with the artificial skin to read Braille, passing the tactile data to Loihi through the cloud to convert the micro bumps felt by the hand into semantic meaning. Loihi identified the Braille letters with over 92% accuracy while using 20 times less power than a standard von Neumann processor.

Will neuromorphics drive the future of robotics?

Building on this work, the NUS team further improved robotic perception by combining event-based vision and touch data in a spiking neural network (SNN). Fusing the two modalities increased object-classification accuracy by 10% compared with a vision-only system. The team also demonstrated the promise of neuromorphic technology for powering such robotic devices: Loihi processed the sensory data 21% faster than a top-performing graphics processing unit (GPU) while using 45 times less power.
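The general idea of fusing two event-based modalities can be illustrated with a toy sketch: timestamped events from a vision sensor and a tactile sensor are each binned into spike rasters, passed through a layer of LIF neurons, and the per-neuron spike counts from both layers are concatenated into one feature vector that a downstream classifier could use. This is a hypothetical simplification, not the NUS team’s network architecture; the function names (`bin_events`, `lif_layer`, `fuse`), the bin size, and all weights are assumptions for illustration.

```python
from typing import List, Tuple

Event = Tuple[float, int]  # (timestamp in ms, sensor channel index)


def bin_events(events: List[Event], n_channels: int,
               n_steps: int, step_ms: float) -> List[List[int]]:
    """Convert asynchronous (time, channel) events into a 0/1 spike raster."""
    raster = [[0] * n_channels for _ in range(n_steps)]
    for t_ms, ch in events:
        step = int(t_ms // step_ms)
        if 0 <= step < n_steps:  # events outside the window are ignored
            raster[step][ch] = 1
    return raster


def lif_layer(raster: List[List[int]], weights: List[List[float]],
              decay: float = 0.9, threshold: float = 1.0) -> List[int]:
    """Run a layer of LIF neurons; weights[j][i] links input i to neuron j.

    Returns the total spike count of each neuron over the whole raster.
    """
    potentials = [0.0] * len(weights)
    counts = [0] * len(weights)
    for spikes_at_t in raster:
        for j, w_row in enumerate(weights):
            # Leak, then integrate the weighted input spikes.
            potentials[j] = potentials[j] * decay + sum(
                w * s for w, s in zip(w_row, spikes_at_t))
            if potentials[j] >= threshold:  # fire and reset
                counts[j] += 1
                potentials[j] = 0.0
    return counts


def fuse(vision_events: List[Event], touch_events: List[Event],
         w_vision: List[List[float]], w_touch: List[List[float]],
         n_steps: int = 10, step_ms: float = 1.0) -> List[int]:
    """Late fusion: concatenate spike counts from the vision and touch layers."""
    vision_raster = bin_events(vision_events, len(w_vision[0]), n_steps, step_ms)
    touch_raster = bin_events(touch_events, len(w_touch[0]), n_steps, step_ms)
    return lif_layer(vision_raster, w_vision) + lif_layer(touch_raster, w_touch)


# Toy input: a 2-channel vision sensor and a 1-channel touch sensor.
vision = [(0.2, 0), (0.7, 1), (3.5, 0)]
touch = [(1.0, 0), (5.2, 0)]
print(fuse(vision, touch, w_vision=[[0.6, 0.6]], w_touch=[[1.0]]))  # → [1, 2]
```

Concatenating spike counts is only one simple fusion choice; it shows how touch features can add information a vision-only feature vector would lack, but it does not reproduce the reported 10% accuracy gain or the Loihi timing and power results.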

Assistant professor Harold Soh from the Department of Computer Science at the NUS School of Computing said: “The (results) show that a neuromorphic system is a promising piece of the puzzle for combining multiple sensors to improve robot perception. It’s a step toward building power-efficient and trustworthy robots that can respond quickly and appropriately in unexpected situations.”

According to Mike Davies, director of Intel’s Neuromorphic Computing Lab: “This research provides a compelling glimpse to the future of robotics where information is both sensed and processed in an event-driven manner combining multiple modalities. The work adds to a growing body of results showing that neuromorphic computing can deliver significant gains in latency and power consumption once the entire system is re-engineered in an event-based paradigm spanning sensors, data formats, algorithms, and hardware architecture.”